The Next Fashion Interface Isn't a Search Bar

It's the Device You're Already Wearing

April 2026

Here's what's happening right now in fashion and AI. Daydream just raised $50 million. Amazon Rufus handled 250 million shoppers last year. Google built agentic checkout into Shopping. OpenAI put purchase flows inside ChatGPT. Everybody — and I mean everybody — is racing to build a smarter way to search for clothes.

And they're all solving the wrong problem.

Fashion isn't a search problem. It never was. It's a taste problem. And the tools being built right now — the agents, the recommendation engines, the algorithmic feeds — they're actually making taste worse.

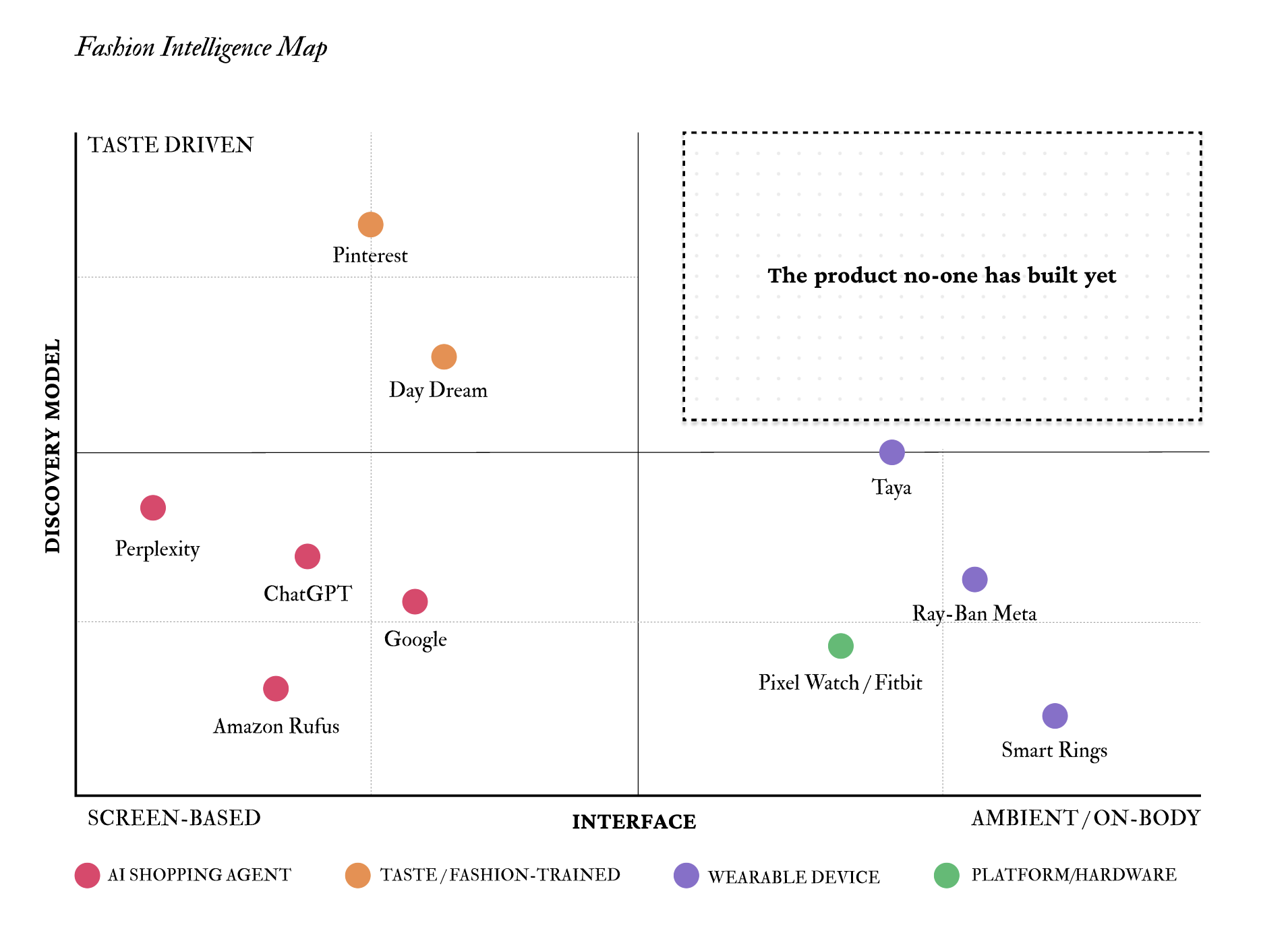

Here's what you're looking at. I mapped ten companies across two axes — how they help you discover things (algorithm-driven vs. taste-driven) and where that discovery happens (on a screen vs. on your body). The left side is packed. The right side is growing. And the upper-right corner — the quadrant where taste-driven discovery meets a device you're actually wearing — is completely empty.

That's the story. Let me walk you through why.

The left side is an arms race

PYMNTS tested the big AI shopping agents this year. They called it the "Toaster Test." Their line was: "What is billed as a revolution in commerce is, for now, mostly a highly intelligent search bar." Billions of dollars in AI investment. And we got a better search bar.

But the real issue isn't that the tools are bad at shopping. It's what they do to taste. Researchers call it the algorithmic taste cage. You click on three black sweaters. Now all you see is black sweaters. Forever. The algorithm doesn't challenge you. It doesn't surprise you. It feeds you more of what you already clicked on and calls it personalization.

Fashion has never worked that way. Fashion is rebellion. It's the thing you didn't know you wanted until you saw it on someone walking down the street. An algorithm that optimizes for what you already like is the opposite of style. It's a cage. And people are starting to feel it: Pinterest published data showing that 69% of Gen Z want AI that improves their lives rather than keeps them scrolling. They want their taste back.

So what does every major shopping agent do? Doubles down. Same playbook: learn what you like, show you more of it, make buying frictionless. Optimize for conversion. Nobody's optimizing for taste.

One company almost gets it

The exception is Daydream. Julie Bornstein built Nordstrom's e-commerce, scaled Stitch Fix, and sold The Yes to Pinterest. She knows fashion technology better than almost anyone alive. She built Daydream around AI that actually understands cut, occasion, color, the real nuances of how people dress. TIME magazine named it one of the Best Inventions of 2025.

But here's the thing. Even Daydream lives in a chat window. You open an app. You type. You scroll. It's a much better search experience. But it's still a search experience. The screen is the ceiling.

The hardware side is doing something nobody expected

While everyone fights over the search bar, wearable devices are becoming things people actually want to put on their bodies.

Ray-Ban Meta glasses? Top-selling item at 60% of Ray-Ban stores across Europe and the Middle East. Meta just signed a 10-year lease on Fifth Avenue. Taya — this AI necklace from a former Apple engineer — sold out its first run with zero paid marketing and 3 million organic views. Smart rings broke out at CES. Turns out, make it look like fashion instead of tech, and people will actually wear it.

Now think about what these devices know about you. They know what you wear. Where you go. How you move. What catches your eye. Ray-Ban Meta has a camera and Llama 4. Taya listens and learns your patterns. Smart rings track your body around the clock.

That's more about you than your phone has ever known. And not a single one of these devices is connected to commerce. Not one.

So why is that quadrant empty?

Because building it requires three things nobody has combined. You need AI that actually understands fashion — real taste, not just product matching. You need hardware on someone's body, reading context as it happens. And you need a point of view about what the whole thing is for. Not to narrow what people buy. To expand what they discover.

That's what makes this interesting. It's not a feature someone forgot to build. It's a fundamentally different product. Something that lives on you, knows your context the way a great stylist does, and shows you things you would never have found yourself.

Google has every piece of this

Which brings me to Google. They already own everything you'd need to build this.

They own the hardware — Pixel Watch, Fitbit, new devices coming this year. Gemini is already running health coaching on those watches. And Google Shopping has agentic checkout built in. This year they announced the Universal Commerce Protocol, which connects AI agents directly to retailer inventory.

Every piece is there. What's missing is the connection. The wearable team builds health products. The commerce team builds shopping tools. The AI team builds models. Nobody inside Google is building the intersection. Nobody has named the strategy.

And look at everyone else. Daydream has the fashion brain but no hardware. Pinterest has the best taste data in the world but no commerce and no device play. Meta has the glasses but no shopping layer. The pieces are sitting on the table. Nobody's assembled them.

Here's what I think happens next

Somebody is going to name this category. Ambient fashion intelligence, or whatever they end up calling it. And the company that builds the product in that empty quadrant — the one that actually connects AI taste to the body — will own the next decade of fashion commerce.

Not because they built a faster checkout. Because they understood something everyone else missed: the future of fashion discovery isn't about helping people find what they're looking for. It's about expanding what they thought they wanted in the first place.

The best stylist you've ever met doesn't ask you what you want. She already knows — and shows you something better.

Yanka Kuhnler · April 2026